Vector Embeddings

How AI Turns Words Into Numbers It Can Understand

Introduction

AI can translate languages, answer questions, recommend movies and even understand emotions in text.

But behind all of this intelligence, there is one concept that makes everything work: vector embeddings.

You may not hear the term often, but embeddings are the foundation of how AI understands meaning.

They help models like GPT recognize relationships between words, compare ideas, and respond in a way that feels surprisingly human.

Why Do We Need Embeddings?

Humans understand language naturally. A single word like “apple” brings images, taste, color and memories.

But computers? They only understand numbers.

Earlier methods tried to convert words into numbers using simple techniques, but they had a major flaw:

Old Methods Didn’t Capture Meaning

“king” and “queen” looked unrelated

“car” and “vehicle” were treated like strangers

“bank (river)” and “bank (money)” looked identical

AI needed a better way, a method to represent meaning, not just text.

That method is vector embeddings.

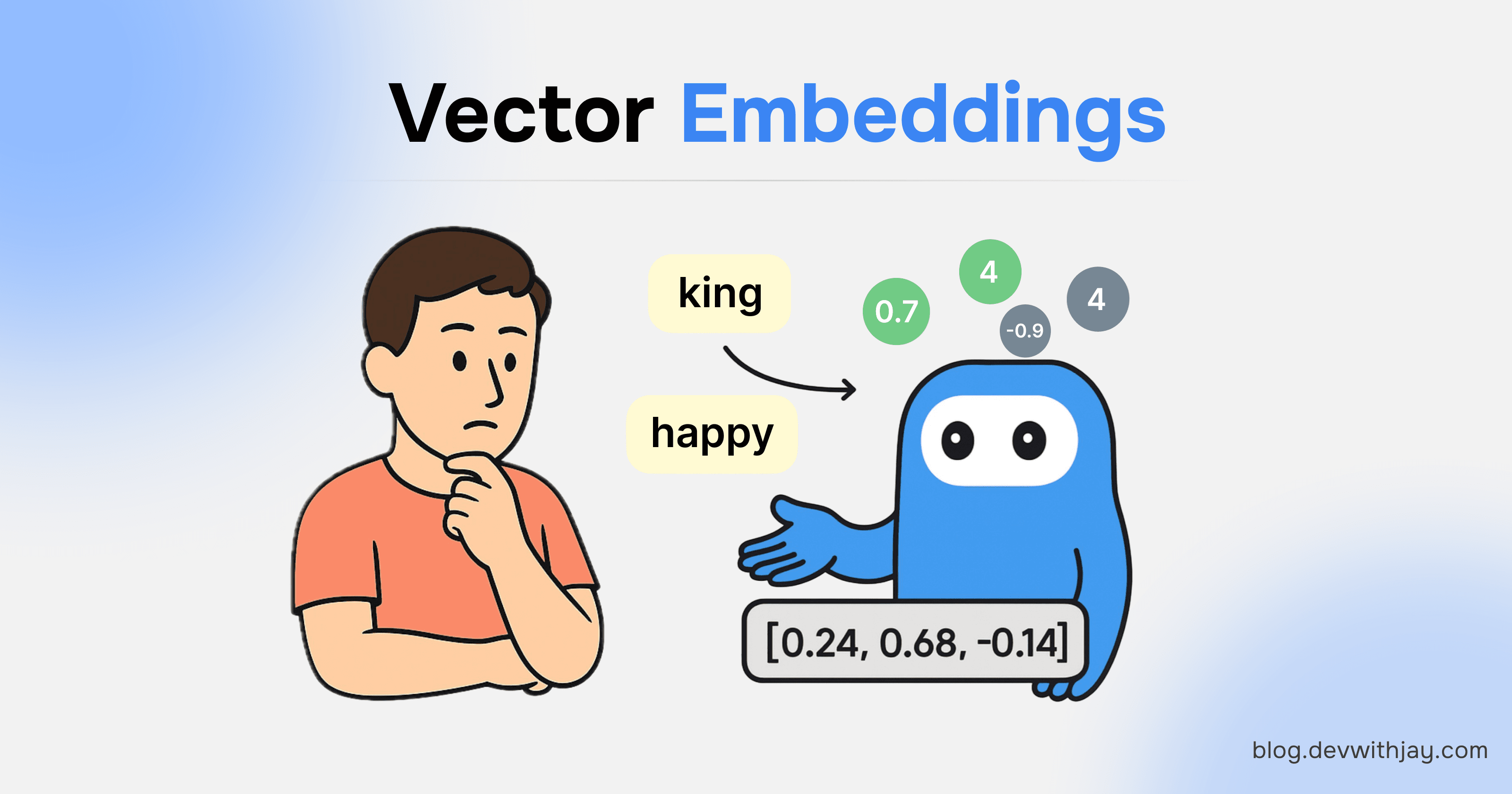

What are Vector Embeddings?

Vector embeddings are numerical representations that capture the meaning of words, sentences or even images.

Instead of storing words as plain text, AI converts them into vectors: long lists of numbers.

The numbers store:

context

relationships

meaning

similarity

So AI doesn’t just see the word “king”.

It sees how “king” relates to “queen”, “royal”, “monarch”, “crown”, and so on.
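
As a rough picture of what such a vector looks like, here is a small hand-written sketch. The numbers and the four-dimensional size are invented for illustration only; real models learn their vectors from data and typically use hundreds or thousands of dimensions.

```python
# Toy embeddings: the values are made up for illustration, not taken from a real model.
toy_embeddings = {
    "king":  [0.82, 0.61, 0.10, 0.05],
    "queen": [0.79, 0.65, 0.12, 0.90],
    "apple": [0.05, 0.10, 0.88, 0.40],
}

for word, vector in toy_embeddings.items():
    # Every word maps to a list of the same length (the embedding dimension).
    print(f"{word:>5} -> {vector} (dimension {len(vector)})")
```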

How Do Embeddings Actually Work?

A good way to understand embeddings is to imagine a giant map.

Every word is a point on this map.

Words with similar meanings appear close together.

Words with opposite or unrelated meanings appear far apart.

Example:

This is how AI feels meaning.

Embeddings allow AI to reason like this:

“queen” is close to “king”

“doctor” is close to “hospital”

“sun” is close to “light”

“milk” is close to “cow”
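
One common way to measure "closeness" on this map is cosine similarity, which compares the direction of two vectors. The sketch below uses three hand-picked, hypothetical vectors; only the formula itself is standard.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical 3-dimensional vectors, chosen by hand for this example.
points = {
    "king":   [0.9, 0.8, 0.1],
    "queen":  [0.85, 0.9, 0.15],
    "banana": [0.1, 0.05, 0.95],
}

print(cosine_similarity(points["king"], points["queen"]))   # close to 1
print(cosine_similarity(points["king"], points["banana"]))  # much lower
```

A score near 1 means the vectors point in almost the same direction; a score near 0 means they have little in common.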

Where Do Embeddings Come From?

Many people think embeddings magically appear, but in modern AI systems they are learned by Transformer models, the same architecture used in GPT, Gemini, Claude, and more.

A Transformer takes input, processes it, and produces meaningful output.

Transformers were introduced by Google researchers in 2017 in a research paper titled “Attention Is All You Need.”

This paper changed everything in AI.

Transformers learn embeddings by reading massive amounts of text and discovering how words appear together, their context, relationships and patterns.

Working of Transformer

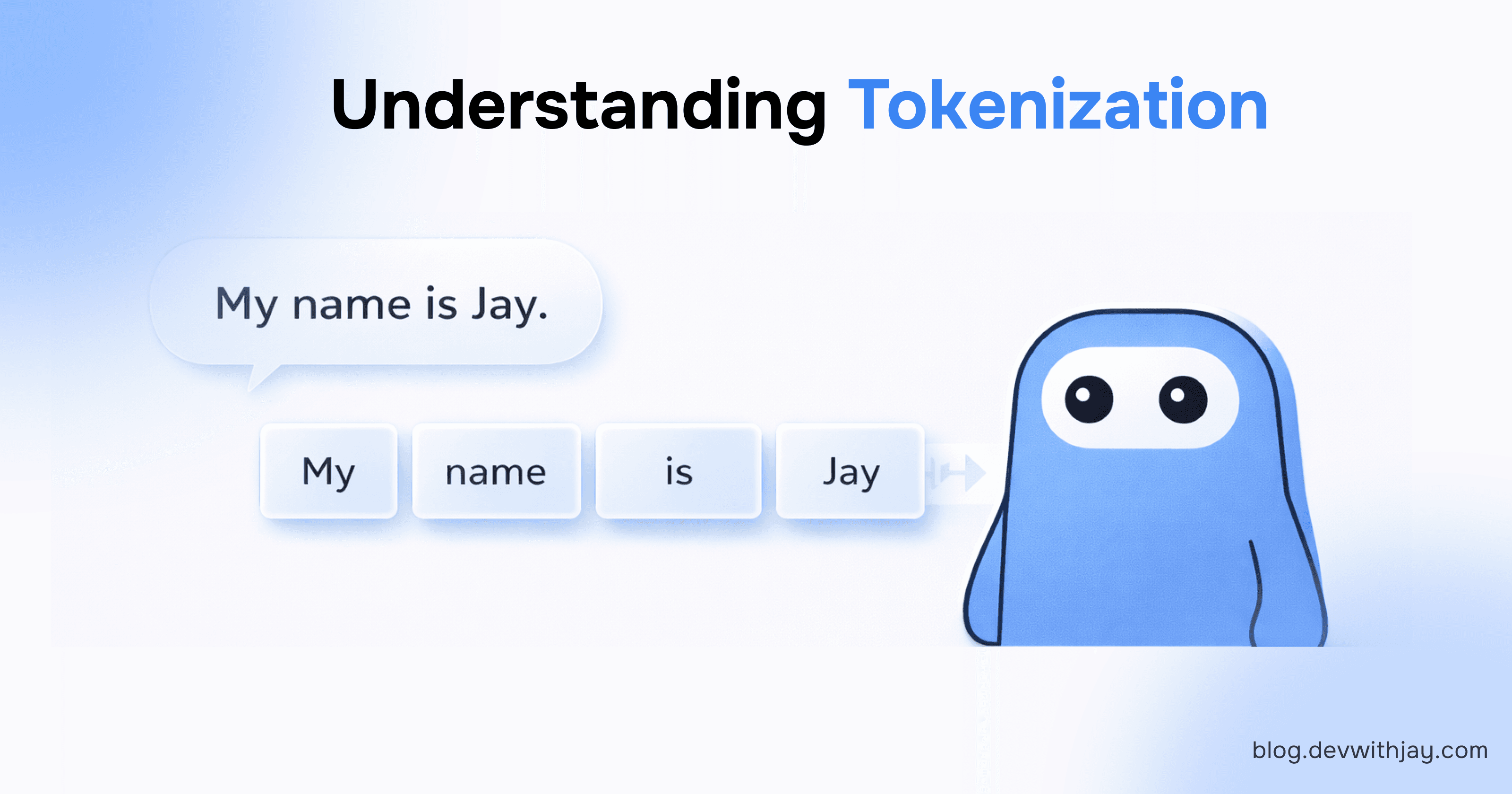

Step 1: Tokenization

AI doesn’t read full words. It breaks text into tokens (small units) like:

“Hello” → “Hel”, “lo”

“Jay” → “J”, “a”, “y”

“Running” → “run”, “ning”

Each token is assigned a number.

These numbers enter the embedding layer.
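
To make the idea concrete, here is a minimal sketch of a tokenizer that splits a word into the longest pieces it can find in a vocabulary. The vocabulary, the IDs, and the greedy matching rule are assumptions made for this example; production tokenizers (BPE or WordPiece, for instance) learn their vocabulary from data and may split the same words differently.

```python
# Toy vocabulary: the pieces and their IDs are invented for this example.
vocab = {"hel": 0, "lo": 1, "run": 2, "ning": 3, "j": 4, "a": 5, "y": 6}

def tokenize(word, vocab):
    """Greedily split a word into the longest pieces found in the vocabulary."""
    word = word.lower()
    tokens = []
    start = 0
    while start < len(word):
        # Try the longest remaining piece first, then shrink it.
        for end in range(len(word), start, -1):
            piece = word[start:end]
            if piece in vocab:
                tokens.append(piece)
                start = end
                break
        else:
            raise ValueError(f"no vocabulary piece matches {word[start:]!r}")
    return tokens

for word in ["Hello", "Jay", "Running"]:
    pieces = tokenize(word, vocab)
    ids = [vocab[p] for p in pieces]  # each token is assigned a number
    print(word, "->", pieces, "->", ids)
```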

Step 2: Input Embedding Layer

This is where tokens are turned into vector embeddings.

Each token becomes a coordinate in multi-dimensional space.

Words with similar meaning end up close together.
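
Conceptually, an embedding layer is a lookup table with one row of numbers per token ID. The sketch below assumes a tiny vocabulary and vector size and fills the table with random values; in a real model these rows are adjusted during training until similar tokens end up with similar vectors.

```python
import random

random.seed(0)  # fixed seed so the example is repeatable

VOCAB_SIZE = 7      # hypothetical vocabulary size
EMBEDDING_DIM = 4   # hypothetical vector length; real models use far more

# One row per token ID. Rows start random and are adjusted during training.
embedding_table = [
    [random.uniform(-1.0, 1.0) for _ in range(EMBEDDING_DIM)]
    for _ in range(VOCAB_SIZE)
]

def embed(token_ids):
    """Look up the vector for each token ID."""
    return [embedding_table[i] for i in token_ids]

vectors = embed([0, 1])  # e.g. the IDs of two tokens
print(len(vectors), "tokens, each a vector of length", len(vectors[0]))
```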

Step 3: Positional Encoding

Embeddings capture meaning, but not order.

Example:

“Dog bites man”

“Man bites dog”

Same words. Different meaning.

Positional encoding helps the model understand word order.
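
One way to do this is the sinusoidal scheme described in “Attention Is All You Need”, where each position gets its own pattern of sine and cosine values that is added to the token's embedding. The sketch below follows that formula; the one-hot word vectors are hypothetical stand-ins, and many current models use other position schemes.

```python
import math

def positional_encoding(position, dim):
    """Sinusoidal encoding for one position (dim is assumed to be even)."""
    encoding = []
    for i in range(0, dim, 2):
        angle = position / (10000 ** (i / dim))
        encoding.append(math.sin(angle))  # even index
        encoding.append(math.cos(angle))  # odd index
    return encoding

# Hypothetical word vectors, kept trivially simple on purpose.
toy = {
    "dog":   [1.0, 0.0, 0.0, 0.0],
    "bites": [0.0, 1.0, 0.0, 0.0],
    "man":   [0.0, 0.0, 1.0, 0.0],
}

def encode(sentence):
    """Add the position encoding to each word's toy embedding."""
    result = []
    for position, word in enumerate(sentence.split()):
        pe = positional_encoding(position, 4)
        result.append([e + p for e, p in zip(toy[word], pe)])
    return result

a = encode("dog bites man")
b = encode("man bites dog")

# "dog" is first in one sentence and last in the other,
# so the same word ends up with two different vectors.
print(a[0])
print(b[2])
```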

Step 4: Self-Attention

This is where Transformers shine.

Self-attention allows the model to understand context:

“bank” near “river” → water

“bank” near “loan” → finance

This step refines the embeddings based on their surroundings.
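
The core of this step can be sketched as scaled dot-product attention. The version below is deliberately simplified: it uses the input vectors directly as queries, keys, and values, whereas a real Transformer first passes them through learned projection matrices and runs several attention heads in parallel. The two-dimensional vectors are hypothetical.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product attention with queries = keys = values = inputs."""
    dim = len(vectors[0])
    outputs = []
    for query in vectors:
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # Each output is a weighted average of all input vectors.
        outputs.append([
            sum(w * v[d] for w, v in zip(weights, vectors))
            for d in range(dim)
        ])
    return outputs

# Hypothetical 2-D vectors: axis 0 ~ "water", axis 1 ~ "finance".
bank, river, loan = [1.0, 1.0], [2.0, 0.0], [0.0, 2.0]

bank_near_river = self_attention([bank, river])[0]
bank_near_loan = self_attention([bank, loan])[0]
print(bank_near_river)  # leans toward the "water" axis
print(bank_near_loan)   # leans toward the "finance" axis
```

The same starting vector for “bank” comes out different depending on its neighbor, which is the refinement described above.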

Step 5: Learned Meaning

After millions of sentences, the model learns relationships like:

Paris → France

Tokyo → Japan

Apple → Fruit

Tiger → Animal

This knowledge lives inside vector embeddings.
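
A well-known way to picture such relationships is as directions in the vector space: the step from a country to its capital looks roughly the same for different countries. The sketch below builds that pattern by hand with hypothetical three-dimensional vectors, so it only illustrates the idea; trained embeddings show it approximately at best.

```python
import math

# Hand-built toy vectors:
# axis 0 ~ "French", axis 1 ~ "Japanese", axis 2 ~ "is a capital city".
words = {
    "France": [1.0, 0.0, 0.0],
    "Paris":  [1.0, 0.0, 1.0],
    "Japan":  [0.0, 1.0, 0.0],
    "Tokyo":  [0.0, 1.0, 1.0],
}

# The "country -> capital" offset taken from one pair...
offset = [p - f for p, f in zip(words["Paris"], words["France"])]
# ...applied to another country.
guess = [j + o for j, o in zip(words["Japan"], offset)]

# Find the word whose vector is nearest to the guess (Euclidean distance).
best = min(words, key=lambda w: math.dist(words[w], guess))
print(best)
```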

Where are Embeddings Used in Real Life?

Embeddings power almost every AI system we use today.

Search Engines

Search “best movies about space”

The system uses embeddings to find content with similar meaning, not just matching keywords.
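
A minimal sketch of this kind of search: every document and the query are represented as vectors, and the results are ranked by similarity. The titles, vectors, and the `search` helper are hypothetical; in a real system the vectors would come from an embedding model rather than being typed in by hand.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))

# Hypothetical precomputed embeddings:
# axis 0 ~ "space", axis 1 ~ "cooking", axis 2 ~ "film".
documents = {
    "Interstellar review":           [0.9, 0.0, 0.8],
    "Top sci-fi films set in orbit": [0.8, 0.1, 0.9],
    "Easy pasta recipes":            [0.0, 0.9, 0.1],
}
query_vector = [0.85, 0.0, 0.85]  # stand-in for "best movies about space"

def search(query, docs, top_k=2):
    """Return the top_k document titles ranked by similarity to the query."""
    ranked = sorted(
        docs,
        key=lambda title: cosine_similarity(query, docs[title]),
        reverse=True,
    )
    return ranked[:top_k]

print(search(query_vector, documents))
```

Neither of the top two titles contains the words “movies” or “space”, yet both rank above the recipe page because their vectors point in a similar direction to the query.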

ChatGPT

Your message → embedding

GPT response → based on embeddings of related ideas.

Recommendations

Services like Netflix, Spotify, and YouTube use embeddings to understand your taste.

Document Similarity

AI can detect:

“This paragraph means the same thing as that one”,

even if the words are totally different.

Image Search

With image embeddings, AI can find:

“pictures similar to this one”

“objects related to this shape”

Embeddings go far beyond text.

Why Do Embeddings Feel So Magical?

Embeddings give AI the ability to:

understand meaning

detect relationships

identify similarities

follow context

bridge languages

They make AI feel smart, even though, in reality, the model is just a powerful prediction machine.

AI does not think.

It predicts the next best output using patterns it has learned.

Types of Embeddings

Word Embeddings

Word Embeddings represent the meaning of individual words as vectors.

They help the model understand:

which words are similar (“happy” and “joyful”)

which words are related (“car” and “engine”)

which words are opposite (“hot” and “cold”)

These embeddings are useful in tasks like:

spell-check

suggestions while typing

simple search systems

sentiment analysis

Sentence Embeddings

Sentence embeddings capture the overall meaning of an entire sentence, not just individual words.

For example:

“I’m feeling great today!”

“Today, I’m in a very good mood.”

The two sentences use different words but mean the same thing.

Sentence embeddings make it possible to recognize this.

These are used in:

chatbots

document search

duplicate question detection (e.g. Stack Overflow)

summary and Q&A systems

Sentence embeddings help AI understand context, tone and intention.
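
One simple baseline for building a sentence vector is mean pooling: average the vectors of the words in the sentence. The sketch below uses hand-made, hypothetical word vectors and ignores words it does not know; dedicated sentence-embedding models are trained for this task and do considerably more than averaging.

```python
import math

# Hand-made word vectors:
# axis 0 ~ "positive feeling", axis 1 ~ "time", axis 2 ~ "weather".
word_vectors = {
    "feeling": [0.6, 0.0, 0.0],
    "great":   [0.9, 0.0, 0.0],
    "today":   [0.0, 0.9, 0.0],
    "good":    [0.8, 0.0, 0.0],
    "mood":    [0.6, 0.0, 0.0],
    "raining": [0.0, 0.0, 0.9],
    "outside": [0.0, 0.1, 0.5],
}

def sentence_embedding(sentence):
    """Average the vectors of the known words in the sentence (mean pooling)."""
    vectors = [word_vectors[w] for w in sentence.lower().split() if w in word_vectors]
    return [sum(column) / len(vectors) for column in zip(*vectors)]

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))

a = sentence_embedding("feeling great today")
b = sentence_embedding("today in a very good mood")
c = sentence_embedding("raining outside")

print(cosine_similarity(a, b))  # high: same meaning, different words
print(cosine_similarity(a, c))  # low: unrelated meaning
```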

Document Embeddings

Document embeddings represent whole paragraphs, articles, reports, or long texts as single vectors.

They help AI:

search inside large knowledge bases

find related articles

retrieve the right information for ChatGPT (RAG systems)

group documents by theme

detect plagiarism or similarity

If you’ve ever used “semantic search” or “AI-powered search”, document embeddings are running behind the scenes.

Image Embeddings

Image embeddings convert images into numbers based on what the image contains.

For example, an AI sees:

a dog → “animal, four legs, fur, outdoors”

a car → “vehicle, wheels, metal, road”

a sunset → “sky, light, orange colors”

These embeddings allow AI to:

find similar images

label images automatically

match text with images (like “dog running”)

understand what an image represents in visual search

Image embeddings are used in systems like Google Photos, Pinterest and modern vision-language models.

The Future of Embeddings

Embeddings are improving rapidly.

The next generation will handle:

text

image

audio

video

emotions

real-world context

All inside a single vector space.

This will allow AI models to understand everything together, not in separate parts.

The more embeddings evolve, the more natural AI will become.

Conclusion

Vector embeddings are the hidden power behind modern AI.

They let machines turn text and images into numbers, not randomly, but meaningfully.

This is how AI understands relationships, compares ideas, and communicates like a human.

Embeddings don’t store words. They store meaning.

And that’s why they are one of the most revolutionary concepts in the world of AI today.

Want More…?

I write articles on blog.devwithjay.com and also post development-related content on the following platforms: